Artificial intelligence and machine learning have become central topics in the news and in analytical reports. They are discussed in the context of digital services, user scenarios, business efficiency, and even the pricing of RAM and GPUs. AI increasingly feels like an everyday technology available to everyone.

Yet few people stop to ask a simple question: what does all of this actually run on?

In this article, we examine how artificial intelligence is reshaping requirements for data centers and how the industry is adapting to new workloads and a new digital reality.

From 5 to 20 kW: why traditional data center infrastructure is not ready for AI workloads

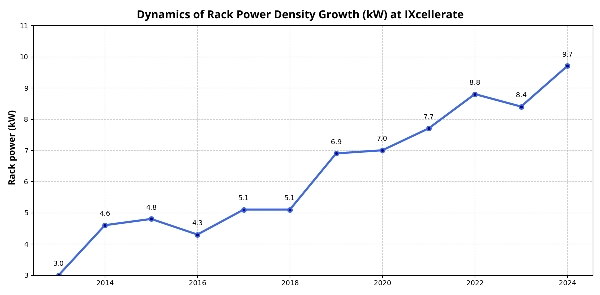

Server power density has been growing even without artificial intelligence. Three years ago, a typical rack required around 5 kW; today, even “standard” enterprise customers increasingly demand 10 kW or more per rack.

This growth is evolutionary and predictable, and data centers have been planning for it in advance.

AI is different. Its growth is revolutionary, and it calls for a fundamental rethinking of data center infrastructure rather than incremental scaling.

Let us look at why.

AI workloads differ fundamentally from traditional enterprise workloads. Classic enterprise demand is relatively predictable, spread over time, and scales linearly. AI workloads, by contrast, require extremely high localized compute density and are highly sensitive to intra-cluster latency. As a result, power is concentrated not at the level of the data hall or row, but at the level of individual racks, and sometimes even of specific GPU configurations within a rack.

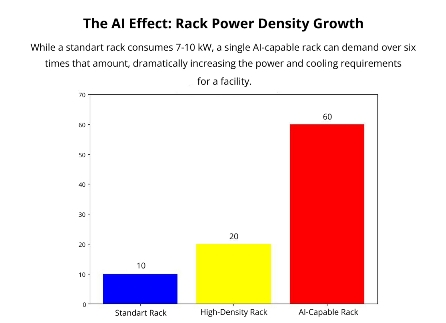

This drives rack-level power requirements up by roughly an order of magnitude compared with the 5 kW racks of a few years ago, reaching 40–60 kW per rack and pushing data centers into an entirely different class of engineering solutions.

Fewer racks, more power?

Retrofitting existing data centers to support AI workloads is often impractical: there is simply not enough available power capacity. The only short-term workaround is to concentrate the available power into fewer, denser racks.

If, for example, 100 conventional racks are replaced with 10 high-density racks, floor space is freed up while total allocated power stays the same: the 10 new racks consume the power previously distributed across 100. However, this is a temporary solution that does not enable sustainable long-term capacity growth, because the facility's total power budget does not increase.
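The arithmetic behind this trade-off is simple: with a fixed power budget, per-rack density grows in inverse proportion to the number of racks. The following is a minimal sketch, assuming roughly 5 kW per conventional rack as mentioned earlier; the function name `density_after_consolidation` is hypothetical.

```python
def density_after_consolidation(old_racks: int, kw_per_old_rack: float, new_racks: int) -> float:
    """Return the per-rack power (kW) when a fixed power budget is
    redistributed from old_racks conventional racks to new_racks dense racks."""
    if new_racks <= 0:
        raise ValueError("new_racks must be positive")
    total_kw = old_racks * kw_per_old_rack  # the hall's power budget does not change
    return total_kw / new_racks


# 100 racks at an assumed 5 kW each give a 500 kW budget.
total_budget_kw = 100 * 5.0
dense_kw = density_after_consolidation(100, 5.0, 10)
print(f"Budget: {total_budget_kw:.0f} kW, per dense rack: {dense_kw:.0f} kW")
# Budget: 500 kW, per dense rack: 50 kW
```

The resulting 50 kW per rack falls within the 40–60 kW range cited above, yet the total stays at 500 kW. Consolidation redistributes existing capacity; it does not add any.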

Water and power: the key resources for hosting AI

Power constraints are only part of the challenge. Traditional cooling approaches are also reaching their limits. Air-based cooling architectures, which scale well at moderate densities, struggle at high power densities. Retrofitting liquid cooling into existing data halls is either technically infeasible or economically prohibitive.

At the same time, power delivery systems become significantly more complex. Both total capacity and stability requirements increase. Regardless of how well engineered the cooling system may be, without sufficient electrical capacity a data center cannot support AI workloads.

Redundancy: A Silver Lining

Generative AI significantly tightens requirements for available power and cooling capacity. However, there is one area where AI simplifies life for data center engineers: redundancy.

AI workloads change the nature of availability requirements. Unlike traditional enterprise systems and services, where any downtime is unacceptable, AI workloads are often more tolerant of power disruptions. Failures in compute processes typically do not result in data loss and can be recovered relatively easily: a training job, for example, can usually resume from its most recent checkpoint. At the massive power scales typical for AI projects, classic enterprise-grade redundancy architectures are not only technically complex but also economically inefficient.
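Interruptions are tolerable because long-running jobs can periodically save their state and resume from it after a failure. The sketch below illustrates the idea with a toy job. It assumes an in-memory dict standing in for persistent storage. `run_job` and `PowerLoss` are hypothetical names, not part of any framework. After a failure, fewer than `checkpoint_every` steps of work are recomputed.

```python
class PowerLoss(Exception):
    """Simulated interruption of the compute job (hypothetical)."""


def run_job(total_steps, checkpoint_every, checkpoint, fail_at=None):
    """Run a toy job that sums step indices, saving progress every
    checkpoint_every steps into checkpoint (an in-memory stand-in for disk).
    Resumes from whatever state the checkpoint already holds."""
    step = checkpoint.get("step", 0)
    acc = checkpoint.get("acc", 0)
    while step < total_steps:
        if fail_at is not None and step == fail_at:
            raise PowerLoss(f"interrupted at step {step}")
        acc += step
        step += 1
        if step % checkpoint_every == 0:
            checkpoint.update(step=step, acc=acc)  # persist progress
    return acc


ckpt = {}
try:
    run_job(1000, checkpoint_every=100, checkpoint=ckpt, fail_at=750)
except PowerLoss as exc:
    print(exc, "- resuming from step", ckpt["step"])
# interrupted at step 750 - resuming from step 700
result = run_job(1000, checkpoint_every=100, checkpoint=ckpt)
print(result == sum(range(1000)))
# True
```

Only the 50 steps between the last checkpoint and the failure are repeated, and the final result is identical to an uninterrupted run. Shortening the checkpoint interval reduces recomputation at the cost of more frequent writes.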

Four Principles for Designing Data Centers for AI

However much operators incrementally adapt the power and cooling systems of legacy data centers, this approach has its limits. The only sustainable path forward is to rethink data center design from the ground up: power, cooling, redundancy, and data hall layout must be treated not as independent subsystems, but as a single integrated architecture.

Designing data centers for AI workloads rests on four fundamental principles:

- Provision grid capacity for high-density loads from day one; retrofitting later is not a viable option.

- Maintain a power reserve, as demand will continue to grow over time.

- Build in architectural headroom, ensuring that space can accommodate and scale advanced cooling systems, including liquid cooling.

- Design infrastructure around the specific technical requirements of AI projects rather than around a one-size-fits-all architecture optimized for the strictest SLA scenarios.
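The first two principles translate into a sizing estimate made before construction. Below is a minimal sketch of such an estimate. It multiplies the IT load by an assumed PUE (power usage effectiveness, the ratio of total facility power to IT power) to cover cooling and distribution overhead, then adds a growth reserve. The model, the function name `required_grid_kw`, and all input values (20 racks, 50 kW each, PUE 1.3, 30% reserve) are hypothetical illustrations, not figures from any real project.

```python
def required_grid_kw(racks: int, kw_per_rack: float, pue: float, reserve_fraction: float) -> float:
    """Hypothetical estimate of grid capacity to request (kW):
    IT load * PUE (cooling/distribution overhead) * (1 + reserve for growth)."""
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    it_load_kw = racks * kw_per_rack       # power drawn by the servers themselves
    facility_kw = it_load_kw * pue         # add cooling and power-delivery overhead
    return facility_kw * (1 + reserve_fraction)  # headroom for future growth


# Illustrative inputs only: 20 racks at 50 kW, PUE 1.3, 30% reserve.
print(f"{required_grid_kw(20, 50.0, 1.3, 0.3):.0f} kW")
# 1690 kW
```

Even this rough model shows why capacity must be secured from day one: a hall with only 1,000 kW of IT load needs nearly 1.7 MW of grid connection once overhead and growth are included.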

All of these principles can be summarized in one idea: new data centers must be designed with strategic flexibility — in architecture, redundancy models, and project economics. This enables both optimized capital expenditure and long-term readiness for future workloads.

From Metropolitan Hubs to Energy Sources: A New Data Center Siting Logic

The emergence of AI workloads has highlighted another constraint: limited access to external power supply. Data centers in major cities are particularly exposed to this issue due to competition for electricity with other consumers and the limited ability to provision new power lines.

As a result, future data centers are likely to be built not at the “crossroads” of network connectivity, but closer to sources of power — nuclear, thermal, or hydroelectric generation.

As an alternative to relocation, some operators are also exploring on-site power generation. Global hyperscalers such as Amazon, Google, and Microsoft are already working in this direction. A more immediately realistic scenario for many operators is on-site gas generation.

AI and the Data Center Market: Big Narratives, Modest Reality

News coverage suggests that generative AI is radically transforming the data center market. In practice, actual demand tells a more restrained story. Many enterprises are still in a wait-and-see mode, observing early implementations. High equipment costs and a lack of operational experience with AI remain significant barriers.

Artificial intelligence has not yet become a mass-market customer segment for commercial data centers in Russia. There is no surge of demand for high-density racks dedicated to machine learning workloads at this stage.

According to research by Uptime Institute, while rack power density continues to increase, the average remains in the 10–30 kW range. Only a limited number of projects operate racks above 30 kW, and “extreme” densities are still relatively rare.

AI is undoubtedly stimulating demand in the data center market, but prices are rising even without it. This is an objective structural trend driven by capacity shortages, rising construction costs, higher engineering complexity, and increasing grid connection costs. Generative AI acts as a catalyst for demand, not its root cause.

The Outlook for AI in Data Centers

The absence of mass AI workloads in commercial data centers does not mean that AI itself is absent. Major technology players such as Yandex and Sber already deliver AI-powered services to end users, but their supporting infrastructure remains largely in-house. As user bases and compute demands grow, corporate infrastructure will inevitably reach scaling limits. Building and operating high-density AI infrastructure is not a core business for companies whose primary model is digital services — it is only a matter of time before this capacity moves to commercial data centers. Data centers must be prepared for this scenario, even if current demand for high-density racks remains limited.

Preparing infrastructure for AI is not just another evolutionary step in data center design and operations. It represents a paradigm shift that the market is only beginning to adapt to. The question is not whether demand will materialize, but when it will become commercially significant.